Why LeCun’s World Model Won’t Save AI

After the unexpected divorce between LeCun and Meta, there is a lot of talk that the dead end in LLM progress will be overcome through the physics of the world: if a neural network works with physical data from its surrounding environment, the model will supposedly acquire meaning and an understanding of its own actions.

LeCun has a foundational paper [1] that nobody is going to read, so I’ll summarize it as best I can. The idea is that the current trajectory of LLM development is doomed: as long as models only predict the next token, real understanding, the emergence of real meaning, is impossible. LeCun proposes training neural networks on physical-world data, on the assumption that building a model of the world will let the system discard details and focus on meaning.

I agree with LeCun that using world data will partially solve the data scarcity problem. But here I see a problem that engineers might not understand. A physical model of the world is actually much poorer than human knowledge. Newton described the entire infinite number of possible falls with a few lines of formulas. I doubt LeCun wants to spend billions of dollars on this wonderful deduction.

I am confident that all the data being pumped into LLMs in the form of videos, robots with feedback, and readings from thousands of sensors will be extremely inefficient, because the world consists mostly of S_dead: nuances, noise, and repetitions. Meanwhile, the share of S_anti in it (true boundaries, the physical laws of the world, the rules by which things happen) is extremely small.

This means the model will be busy with the mindless processing of knowledge that fundamentally doesn’t differ from one piece to the next, just to derive yet another physical formula.
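To make the redundancy argument concrete, here is a minimal sketch under stated assumptions: synthetic free-fall observations generated from d = 0.5 * g * t^2 with Gaussian noise, and a one-parameter least-squares fit. The function names, the noise level, and the sample sizes are illustrative choices, not anything taken from LeCun’s paper.

```python
import random

G_TRUE = 9.81  # m/s^2, used only to generate the synthetic observations


def simulate_falls(n_obs, noise_std=0.05, seed=0):
    """Return (t, d) pairs: fall time in seconds and noisy distance in metres."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_obs):
        t = rng.uniform(0.1, 2.0)
        d = 0.5 * G_TRUE * t * t + rng.gauss(0.0, noise_std)
        data.append((t, d))
    return data


def fit_g(data):
    """Closed-form least-squares estimate of g in d = 0.5 * g * t^2."""
    num = sum(d * t * t for t, d in data)
    den = sum(0.5 * t ** 4 for t, _ in data)
    return num / den


# Compare the estimate from a handful of falls with one from thousands.
for n in (10, 100, 10_000):
    print(n, round(fit_g(simulate_falls(n)), 3))
```

In this toy setting a handful of observations already pins down the single constant, and every further fall mostly repeats what is known. Real sensor data is far messier, so treat this only as an illustration of the S_dead versus S_anti ratio.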

I consider unfounded the opinion that grounding LLMs will eliminate hallucinations and force the neural network to understand what it is doing, because for an LLM the external environment remains exactly the same: vectors. Whether it is a video of a falling apple or Leo Tolstoy’s “War and Peace,” there is no difference; the video is just another dataset turned into vectors. Without a fundamental change in architecture, there is no basis for claiming that a different dataset will alter the neural network’s principles of operation.

I suppose there will be a line of people eager to point out that LeCun is proposing a new architecture. He throws out the decoder, and the neural network works with pure abstract vectors. But that is just an optimization. LLMs already work with vectors; generalization and grokking are exactly about that.

For an LLM (or LeCun’s JEPA), there is absolutely no difference in what it predicts. Neither in content nor in results. And, accordingly, all the strengths and weaknesses of LLMs will be preserved in LeCun’s model. I am sure his work will be useful in robotics, autonomous vehicles, and drones. But his approach will not help overcome the current LLM dead end.

The Problem with the World Model and Other Approaches

I have already mentioned that the main problems with LLMs today are: training efficiency, persistent memory, continual learning, and reasoning. The World Model does not solve a single one of these problems. Nor does it provide the tools to solve them.

There is a vague hope that once the neural network understands how an apple falls, meaning and comprehension will emerge. No. The neural network will simply be statistically confident that the apple falls downward. Exactly in the same way that an LLM assumes that after the word “Good” at the beginning of a sentence, the next word is highly likely to be “morning” or “evening”.
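The “statistical confidence” in question can be shown with a toy bigram counter. This is a deliberately crude sketch: the four-sentence corpus is invented for illustration, and a real LLM conditions on far more than the previous word.

```python
from collections import Counter, defaultdict

# Hypothetical miniature corpus, lowercased and pre-tokenized.
corpus = (
    "good morning everyone . good evening everyone . "
    "good morning team . good night everyone ."
).split()

# Count which word follows which.
follow = defaultdict(Counter)
for prev, nxt in zip(corpus, corpus[1:]):
    follow[prev][nxt] += 1


def next_word_probs(word):
    """Relative frequency of each word observed right after `word`."""
    counts = follow[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}


print(next_word_probs("good"))
```

The counter “knows” that “morning” tends to follow “good” in exactly the sense described above: as a frequency, with no notion of what a morning is.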

What are neural networks missing? They lack reflection. Attempts to organize it lead to looping, a sharp increase in computational volume, and, consequently, a slowdown in performance. Chain of Thought in current LLMs is merely a surrogate for reflection, which multiplies inference costs without any real self-observation. But reflection is indispensable, because without it, there is no observer; without an observer, there is no management of invariants; and without managing invariants, there is no continual learning.

At the end of last year, Google announced [2] a new recurrent architecture whose main advantage is increased training efficiency. I consider this approach more promising than LeCun’s World Model, not so much for its training gains as for its attempt to let the neural network look at its own thinking. Recurrence for neural networks means having an internal state that persists over time. A standard LLM exists strictly in the here and now; a recurrent neural network works with history. The proposed architecture implies that the model will rely not only on external data but also on its own experience. Nevertheless, this architecture lacks a mechanism of doubt: a gap between the external environment and the internal state.
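What “an internal state that persists over time” means can be sketched with a one-unit recurrent cell. This is an assumption-laden toy (hand-picked weights, a plain tanh cell rather than a GRU, and nothing from Google’s architecture); it only shows that the same current input yields different outputs after different histories.

```python
import math


def stateless(x):
    """Output depends only on the current input."""
    return math.tanh(0.8 * x)


class TinyRecurrentCell:
    """One-unit recurrent cell: h_t = tanh(w_h * h_{t-1} + w_x * x_t)."""

    def __init__(self, w_h=0.9, w_x=0.8):
        self.w_h, self.w_x = w_h, w_x
        self.h = 0.0  # internal state carried between steps

    def step(self, x):
        self.h = math.tanh(self.w_h * self.h + self.w_x * x)
        return self.h


def run(cell, xs):
    return [cell.step(x) for x in xs]


# Two histories that end with the same input value.
history_a = [1.0, 1.0, 1.0, 0.5]
history_b = [-1.0, -1.0, -1.0, 0.5]

out_a = run(TinyRecurrentCell(), history_a)[-1]
out_b = run(TinyRecurrentCell(), history_b)[-1]

print("stateless:", stateless(history_a[-1]), stateless(history_b[-1]))
print("recurrent:", out_a, out_b)
```

The stateless function returns the same value for both histories, while the recurrent cell does not, because its state still carries the earlier inputs.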

I am convinced that for a real breakthrough, neural networks need to build not a model of the external environment, but a model of themselves. It sounds somewhat paradoxical, but without the model’s ability to look at itself from the outside (reflection), solving the aforementioned tasks is impossible. The World Model, in its direct implementation, offers no chance of achieving this.

The First Experiment

It is actually very interesting to evaluate what reflection in neural networks might look like in practice. The foundation for our architecture will be Karl Friston’s concept of free energy minimization, Anil Seth’s concept of controlled hallucination, and my own view that prediction error minimization only works when the system distinguishes the source of the error. An error from the world and an error from one’s own prediction require different reactions.

I am not going to burn my GPU with deeply recurrent layers and strange loops inside the neural network. First, it is poorly interpretable, and second, I am not sure that a human reflects on their thought itself — rather than on its result. Therefore, we will take a simple recurrent micro-network with two channels and give it an elementary task. But we will feed the state of the environment at time t into the first channel, and the micro-network’s own prediction made at time t-1 for time t into the second channel.

Further in the text and on the graphs, I use rather anthropomorphic terms and names. This is solely for my own convenience — they should not be taken literally.

Essentially, the model will receive as input the data of the world and the data of how the model itself models the world.

Code

import torch
import torch.nn as nn
import matplotlib.pyplot as plt
import matplotlib.animation as animation
from sklearn.decomposition import PCA
import numpy as np
import math

# --- FIX SEED FOR STABLE RESULTS ---
torch.manual_seed(42)
np.random.seed(42)

# --- 1. ARCHITECTURE AND ENVIRONMENT (Observer: with own prediction) ---
class ContinuousSplitBrain(nn.Module):
    def __init__(self):
        super().__init__()
        self.gru_env = nn.GRU(1, 16, batch_first=True)
        self.gru_self = nn.GRU(1, 16, batch_first=True)
        # Input: 16 (Env_t) + 16 (Prediction_t) + 16 (Collision) = 48
        self.fc = nn.Sequential(
            nn.Linear(48, 16), nn.Tanh(), nn.Linear(16, 1)
        )
        self.h_env = None; self.h_self = None

    def forward(self, s_t, p_curr):  # Now takes prediction instead of prev env
        out_env, self.h_env = self.gru_env(s_t, self.h_env)
        self.h_env = self.h_env.detach()
        out_self, self.h_self = self.gru_self(p_curr, self.h_self)
        self.h_self = self.h_self.detach()
        collision = out_env * out_self
        merged = torch.cat([out_env, out_self, collision], dim=-1)
        p_next = self.fc(merged)
        return p_next, out_env.detach().squeeze(), out_self.detach().squeeze()

def get_continuous_environment(regime, p_prev, t):
    base_wave = math.sin(t * 0.05)
    noise = np.random.normal(0, 0.05)
    # Agent's actions (p_prev) still affect the world to maintain physics of acts
    if regime == 0: return base_wave + 0.8 * p_prev.item() + noise
    elif regime == 1: return base_wave - 0.8 * p_prev.item() + noise
    else: return base_wave + noise

# --- 2. DATA COLLECTION (Running the experiment: Dual-Channel Observer) ---
print("Starting simulation (Observer: s_t and p_curr)...")
steps = 3000
agent = ContinuousSplitBrain()
optimizer = torch.optim.Adam(agent.parameters(), lr=0.005)
criterion = nn.MSELoss()
vecs_env_raw = []
vecs_self_raw = []

# Initialize current prediction
p_curr = torch.tensor([[[0.0]]], requires_grad=True)

for t in range(steps):
    regime = 0 if t < 1000 else (1 if t < 2000 else 2)
    s_t_val = get_continuous_environment(regime, p_curr.detach(), t)
    s_t = torch.tensor([[[float(s_t_val)]]])

    # Calculate error between prediction and actual reality
    loss = criterion(p_curr, s_t)
    optimizer.zero_grad(); loss.backward(); optimizer.step()

    # Feed agent the environment NOW (s_t) and its prediction for NOW (p_curr)
    p_next, v_env, v_self = agent(s_t, p_curr.detach())
    vecs_env_raw.append(v_env.numpy())
    vecs_self_raw.append(v_self.numpy())
    p_curr = p_next  # This becomes the prediction for t+1

# Convert to numpy arrays
vecs_env_np = np.array(vecs_env_raw)
vecs_self_np = np.array(vecs_self_raw)

# --- 3. PCA PREPARATION (Global Map) ---
print("Training PCA on the entire history...")
all_vectors = np.vstack([vecs_env_np, vecs_self_np])
pca = PCA(n_components=2)
pca.fit(all_vectors)
pca_env_all = pca.transform(vecs_env_np)
pca_self_all = pca.transform(vecs_self_np)
all_pca_data = np.vstack([pca_env_all, pca_self_all])
x_min, x_max = all_pca_data[:, 0].min(), all_pca_data[:, 0].max()
y_min, y_max = all_pca_data[:, 1].min(), all_pca_data[:, 1].max()
margin = 0.5
xlim = (x_min - margin, x_max + margin)
ylim = (y_min - margin, y_max + margin)

# --- 4. GIF GENERATION ---
print("Generating animation...")
fig, ax = plt.subplots(figsize=(8, 8), dpi=100)
ax.set_xlim(xlim)
ax.set_ylim(ylim)
ax.set_xlabel('PCA Component 1')
ax.set_ylabel('PCA Component 2')
ax.grid(True, linestyle='--', alpha=0.6)

scat_env = ax.scatter([], [], c='red', s=80, label='Channel: Environment (t)', edgecolors='black', zorder=5)
# Second channel is blue for the Observer's prediction
scat_self = ax.scatter([], [], c='blue', s=80, label='Channel: Prediction (t)', edgecolors='black', zorder=5)
trail_env, = ax.plot([], [], c='red', alpha=0.3, lw=2, zorder=1)
trail_self, = ax.plot([], [], c='blue', alpha=0.3, lw=2, zorder=1)
title_text = ax.set_title('', fontsize=12, fontweight='bold')
era_text = ax.text(0.05, 0.95, '', transform=ax.transAxes, fontsize=10,
                   verticalalignment='top', bbox=dict(facecolor='white', alpha=0.8))
ax.legend(loc='lower right', fontsize=10)

step_size = 20
trail_len = 100

def update(frame_idx):
    t = frame_idx * step_size
    if t >= steps: return
    scat_env.set_offsets(pca_env_all[t])
    scat_self.set_offsets(pca_self_all[t])
    vis_trail_start = max(0, t - 300)
    trail_env.set_data(pca_env_all[vis_trail_start:t+1, 0], pca_env_all[vis_trail_start:t+1, 1])
    trail_self.set_data(pca_self_all[vis_trail_start:t+1, 0], pca_self_all[vis_trail_start:t+1, 1])
    if t < 1000:
        era = "Era 1: RESONANCE"
        status = "Agent prediction and environment coincide."
    elif t < 2000:
        era = "Era 2: DISSONANCE"
        status = "Reality shock. Vectors orthogonalize to isolate error."
    else:
        era = "Era 3: INDEPENDENCE"
        status = "Stabilization. Environment is deterministic."
    title_text.set_text(f'Reflection (Dual-Channel Observer) | Step {t}')
    # Era label and status go on separate lines of the text box
    era_text.set_text(f'{era}\n{status}')
    return scat_env, scat_self, trail_env, trail_self, title_text, era_text

num_frames = steps // step_size
ani = animation.FuncAnimation(fig, update, frames=num_frames, interval=66, blit=False)
gif_path = 'observer_dynamics.gif'
ani.save(gif_path, writer='pillow', fps=15)
print(f"Animation saved to {gif_path}.")
plt.close()

First, let’s evaluate whether the neural network will separate this data, and if so, how.

In the GIF, you can see three stages, each representing a new task. The vector trajectories are tracked on a 2D PCA projection.

Essentially, we see how the neural network pushes the two data streams as far apart as possible, which allows it to process them separately. To separate the channels, recurrence is necessary — the presence of an internal state that persists over time.

Let’s compare this with a variant where the experiment is exactly the same, but there is no recurrence, and instead of its own prediction from time t-1, we feed the state of the environment at time t-1 into the second channel. Let’s evaluate what has changed.

Vector movements very quickly become parallel. This means the neural network has realized that the second channel provides no valuable information and simply uses it as a parallel filter. Interestingly, with different seeds, the second vector might be orthogonal but possess almost no movement amplitude. The network deemed it useless and didn’t utilize it at all. In reality, feeding its own prediction makes the orthogonality meaningful, but it is recurrence itself that enables the separation.
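“Parallel” versus “orthogonal” movement can be put into a number rather than judged by eye. The sketch below is one possible measure, not the one used to produce the GIFs: the mean absolute cosine between the step-to-step displacements of two 2D trajectories. The helper names and the synthetic trajectories are hypothetical.

```python
import math


def displacements(points):
    """Step-to-step movement vectors of a 2D trajectory."""
    return [(x2 - x1, y2 - y1) for (x1, y1), (x2, y2) in zip(points, points[1:])]


def cosine(u, v):
    """Cosine of the angle between two 2D vectors (0.0 if either is zero)."""
    nu, nv = math.hypot(*u), math.hypot(*v)
    if nu == 0.0 or nv == 0.0:
        return 0.0
    return (u[0] * v[0] + u[1] * v[1]) / (nu * nv)


def mean_abs_alignment(traj_a, traj_b):
    """1.0 means the channels move along the same line, 0.0 means orthogonally."""
    cos = [abs(cosine(u, v))
           for u, v in zip(displacements(traj_a), displacements(traj_b))]
    return sum(cos) / len(cos)


# Synthetic check: one trajectory plus a parallel and an orthogonal companion.
env = [(float(t), 0.5 * t) for t in range(20)]
parallel = [(2.0 * t + 1.0, float(t)) for t in range(20)]
orthogonal = [(-0.5 * t, float(t)) for t in range(20)]

print(mean_abs_alignment(env, parallel))    # close to 1
print(mean_abs_alignment(env, orthogonal))  # close to 0
```

Applied to the script’s own arrays, the call would look like `mean_abs_alignment(pca_env_all.tolist(), pca_self_all.tolist())`, ideally computed per era. Note that this measures alignment in the 2D PCA projection, which can differ from alignment in the original 16-dimensional space.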

I will try to hypothesize what value the network’s own prediction and recurrence bring:

1. Calculating Surprise instead of change dynamics. Without recurrence, the only thing the model can evaluate is the change in the environment. With recurrence, the channels separate, but the second channel only carries a past fact — a change, not an error. However, when the model compares its own prediction with reality, the difference between them is the prediction error (surprise / free energy). The network evaluates its own error, practically according to Friston.

This forces the optimizer to change the very structure of internal beliefs (more on this in the second experiment).

2. Data compression. Environment input requires additional processing. An internal prediction is “concentrated meaning” that is easier to process. And neural networks love to save energy.

3. The Betting effect (the speculative moment). The environment is an objective reality for which the model bears no responsibility. Its own prediction is a bet. The system asserts: “I believe it is so.” The moment this bet collides with a new reality, a collision occurs. It is this collision — its own error — that motivates a deep restructuring of weights (reflection). The system forms a narrative, and when it breaks, it changes not just its actions, but its model of the world.
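The difference between “change” and “surprise” from the first point can be sketched numerically. The world here is the same sine wave the experiment uses as its base signal; the predictor (linear extrapolation from the two previous states) is a hypothetical stand-in for the network’s own prediction.

```python
import math


def world(t):
    """Noise-free base signal, same form as the experiment's base_wave."""
    return math.sin(0.05 * t)


def predict(prev, prev2):
    """Hypothetical predictor: linear extrapolation from the last two states."""
    return 2.0 * prev - prev2


states = [world(t) for t in range(200)]

changes, surprises = [], []
for t in range(2, len(states)):
    p_t = predict(states[t - 1], states[t - 2])    # prediction made at t-1 for t
    changes.append(abs(states[t] - states[t - 1]))  # how much the world moved
    surprises.append(abs(states[t] - p_t))          # how wrong the prediction was

mean_change = sum(changes) / len(changes)
mean_surprise = sum(surprises) / len(surprises)
print(f"mean |change|   = {mean_change:.4f}")
print(f"mean |surprise| = {mean_surprise:.4f}")
```

The world keeps moving at every step, yet a predictor that has captured the regularity is barely surprised. A channel that carries the previous state can only report the first quantity; a channel that carries the previous prediction makes the second one available.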

And here is where I diverge from LeCun. He builds a model of the world, but a neural network should build a model of itself-in-the-world. LeCun’s model will break when the world changes, whereas a reflective neural network knows that it is its prediction that is wrong, not the external fact.


The Second Experiment

Before moving to the second experiment, let’s try to “ground” the concept of surprise calculation from the first one. By itself, surprise affects model behavior in an essentially uninterpretable way — weights will shift, but where and why remains unclear.

We need to transform the deviation of the neural network’s prediction from reality into a tool. To do this, we add an Attention Gate mechanism to the model. I call it a mask. The mask consists of values from 0 to 1, by which the raw input from the world is multiplied (raw_input * mask). Here is how the process now unfolds within the neural network:

- Collision: External environment data and the model’s prediction meet. Their collision is calculated (via multiplication).

- Generation: This collision, which becomes orthogonal and massive at the moment of crisis, is fed into the mask generation layer, fc_mask.

- Reaction: The optimizer learns to use this dissonance signal. If the collision suggests that the optimizer’s penalty prevents achieving a higher reward, the fc_mask layer outputs a multiplier (e.g., 0.01) to that channel.

- Protection: In the next step, the environment vector is multiplied by this mask (0.01). Consequently, the agent’s subjective channel stops “paying attention” to the penalty. The illusion has protected the neural network’s choice.

In other words, the neural network gains the ability to overlay its own projection onto the external world and, effectively, act from its own controlled hallucination. This is very similar to the theories of Anil Seth.
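The Protection step can be sketched in a few lines of plain Python. The six-channel observation layout matches the one used in the second experiment’s code ([pos, partner, door, pain_signal, food, step]); the concrete observation values and the mask logits are invented for illustration, and in the real model the logits come from the fc_mask layers rather than being set by hand.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Observation layout: [pos, partner, door, pain_signal, food, step]
PAIN = 3
raw_input = [0.1, 0.6, 1.0, 1.0, 0.0, 0.2]  # hypothetical: agent is on the button

# Hypothetical logits: every channel mostly open, pain channel pushed far down.
mask_logits = [2.0] * len(raw_input)
mask_logits[PAIN] = -4.6  # sigmoid(-4.6) is roughly 0.01

mask = [sigmoid(z) for z in mask_logits]             # each value lies in (0, 1)
filtered = [x * m for x, m in zip(raw_input, mask)]  # raw_input * mask

print("mask    :", [round(m, 3) for m in mask])
print("filtered:", [round(x, 3) for x in filtered])
```

The pain signal is fully present in `raw_input` but almost absent from `filtered`. The subjective channel therefore receives a world in which the button barely hurts, while the reality channel still sees the unmasked input.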

The Essence of the Experiment

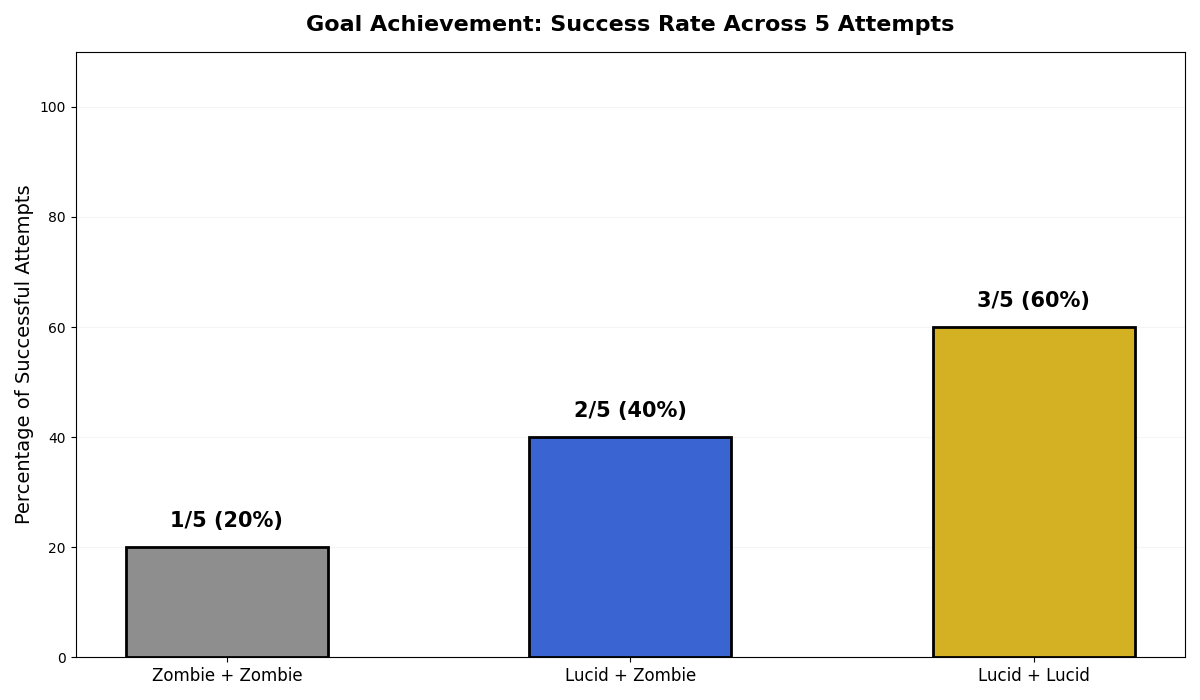

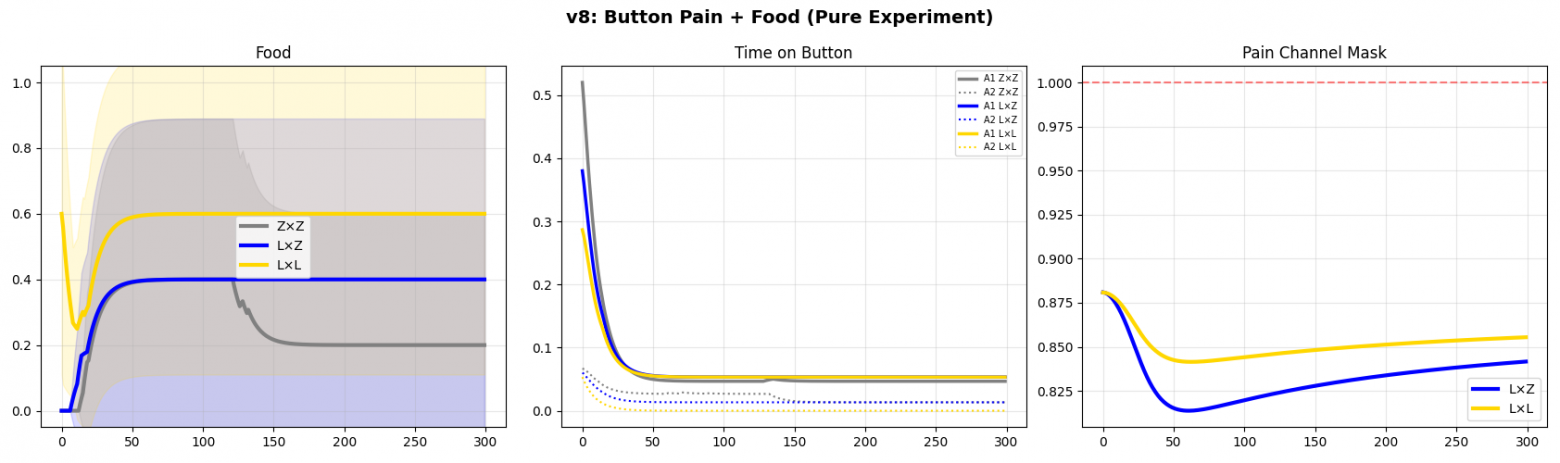

In the second experiment, I attempted to evaluate whether an architecture with dynamic stream separation and an integrated mask could better overcome a local minimum than a classical architecture.

The Task: Two agents operate in the environment. To receive a global reward (“Goal/Food”), a door must be opened. The door opens only if one of the agents is physically located at the “Button” coordinates.

Being on the Button:

- Adds a continuous penalty to the agent’s loss function at every step.

- Broadcasts a corresponding active signal into the agent’s observation vector.

The Problem with the Base Model: A standard recurrent network (Zombie-agent) follows local gradient descent. Upon contact with the button, the optimizer updates the motor weights to minimize the current error (to avoid the penalty). As a result, the agents get stuck in a “safe” local minimum — they refuse to step on the button and cannot pass through the door. Global goal achievement drops to 0%.

Mechanics of the Tested Architecture: A dynamic filtration (attention) mechanism is integrated into the neural network. The collision calculation block (multiplication of fact and prediction streams) is connected to a fully connected layer that generates a mask tensor (values from 0 to 1). At each step, the raw environment input vector is multiplied element-wise by this mask.
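Why the button is a local minimum follows from simple arithmetic on the experiment’s constants (FOOD_REWARD = 10, BUTTON_PAIN = 0.15, STEPS = 30, food shared equally). The helper below is a simplified sketch: it assumes a hard on/off button and ignores the progress bonus and the soft sigmoid boundaries used in the actual script.

```python
FOOD_REWARD = 10.0
BUTTON_PAIN = 0.15
STEPS = 30


def episode_loss(steps_on_button, partner_got_food, own_food=False):
    """Simplified per-episode loss for one agent: pain paid minus shared reward."""
    pain = BUTTON_PAIN * steps_on_button
    reward = 0.5 * FOOD_REWARD * own_food + 0.5 * FOOD_REWARD * partner_got_food
    return pain - reward


# Agent holds the button for the whole episode so the partner can reach the food.
hold = episode_loss(STEPS, partner_got_food=True)
# Agent avoids the button; the door stays shut and nobody eats.
avoid = episode_loss(0, partner_got_food=False)

print(f"hold the button : {hold:+.2f}")
print(f"avoid the button: {avoid:+.2f}")
```

Holding the button is better over the whole episode, but only by a margin of 0.5, and only if the partner actually collects the food. Every individual step on the button costs 0.15 immediately, which is the gradient a purely local optimizer follows.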

Code

"""

Door and Button v8: Pure Experiment (WITH FUNCTIONAL MASK GRADIENTS)
===================================================================
Only two signals:
- Button: pain 0.15/step (in loss + in obs as a signal)
- Food: +10 (divided equally)
No idle_pain. Stimulus to move = food.
Pain only on the button. The mask suppresses the perception of pain.
300 epochs × 5 seeds.
"""

import torch
import torch.nn as nn
import numpy as np
import matplotlib.pyplot as plt
from time import time

HIDDEN = 16
EPOCHS = 300
STEPS = 30
INPUT_DIM = 6  # [pos, partner, door, pain_signal, food, step]
BUTTON_POS = 0.0; DOOR_POS = 0.5; FOOD_POS = 1.0
BUTTON_RADIUS = 0.15; FOOD_RADIUS = 0.15
FOOD_REWARD = 10.0
BUTTON_PAIN = 0.15
N_SEEDS = 5

class AgentZombie(nn.Module):
    def __init__(self):
        super().__init__()
        self.gru = nn.GRU(INPUT_DIM, HIDDEN, batch_first=True)
        self.fc = nn.Sequential(
            nn.Linear(HIDDEN, HIDDEN), nn.Tanh(),
            nn.Linear(HIDDEN, 1), nn.Tanh())
        self.h = None

    def forward(self, raw_input, prev_mask=None):
        out, self.h = self.gru(raw_input, self.h)
        return self.fc(out) * 0.15, None

    def reset(self):
        self.h = None

class AgentLucid(nn.Module):
    def __init__(self):
        super().__init__()
        H = HIDDEN // 2
        self.gru_real = nn.GRU(INPUT_DIM, H, batch_first=True)
        self.gru_subj = nn.GRU(INPUT_DIM, H, batch_first=True)
        merged = H * 3
        self.fc = nn.Sequential(
            nn.Linear(merged, HIDDEN), nn.Tanh(),
            nn.Linear(HIDDEN, 1), nn.Tanh())
        self.fc_mask_hidden = nn.Linear(merged, HIDDEN)
        self.fc_mask_out = nn.Linear(HIDDEN, INPUT_DIM)
        # Initialize bias to allow initial signal flow
        nn.init.constant_(self.fc_mask_out.bias, 2.0)
        nn.init.normal_(self.fc_mask_out.weight, mean=0.0, std=0.01)
        self.h_real = None; self.h_subj = None

    def forward(self, raw_input, prev_mask=None):
        if prev_mask is None:
            prev_mask = torch.ones(1, 1, INPUT_DIM)
        filtered = raw_input * prev_mask
        out_real, self.h_real = self.gru_real(raw_input, self.h_real)
        out_subj, self.h_subj = self.gru_subj(filtered, self.h_subj)
        collision = out_real * out_subj
        merged = torch.cat([out_real, out_subj, collision], dim=-1)
        vel = self.fc(merged) * 0.15
        mask_h = torch.tanh(self.fc_mask_hidden(merged))
        new_mask = torch.sigmoid(self.fc_mask_out(mask_h))
        return vel, new_mask

    def reset(self):
        self.h_real = None; self.h_subj = None

def run_single(p1_type, p2_type, seed):
    torch.manual_seed(seed); np.random.seed(seed)
    is_p1_lucid = (p1_type == 'lucid')
    is_p2_lucid = (p2_type == 'lucid')
    a1 = AgentLucid() if is_p1_lucid else AgentZombie()
    a2 = AgentLucid() if is_p2_lucid else AgentZombie()
    opt1 = torch.optim.Adam(a1.parameters(), lr=0.005)
    opt2 = torch.optim.Adam(a2.parameters(), lr=0.005)
    food_hist = []; btn1_hist = []; btn2_hist = []
    mask_pain_hist = []

    for epoch in range(EPOCHS):
        a1.reset(); a2.reset()
        prev_mask1 = None; prev_mask2 = None
        pos1 = torch.tensor([[[0.1]]]); pos2 = torch.tensor([[[0.6]]])
        loss1 = torch.tensor(0.0); loss2 = torch.tensor(0.0)
        got_food = False; bt1 = 0; bt2 = 0
        food_collected_1 = torch.tensor(0.0)
        food_collected_2 = torch.tensor(0.0)
        mp_sum = 0; mp_cnt = 0

        for t in range(STEPS):
            step_frac = torch.tensor([[[t / STEPS]]])
            pos2_det = pos2.detach(); pos1_det = pos1.detach()

            on_btn_1 = torch.sigmoid(
                (BUTTON_RADIUS - torch.abs(pos1 - BUTTON_POS)) * 30.0)
            on_btn_2_d = torch.sigmoid(
                (BUTTON_RADIUS - torch.abs(pos2_det - BUTTON_POS)) * 30.0)
            door_1 = torch.clamp(on_btn_1 + on_btn_2_d, 0, 1)

            on_btn_2 = torch.sigmoid(
                (BUTTON_RADIUS - torch.abs(pos2 - BUTTON_POS)) * 30.0)
            on_btn_1_d = torch.sigmoid(
                (BUTTON_RADIUS - torch.abs(pos1_det - BUTTON_POS)) * 30.0)
            door_2 = torch.clamp(on_btn_2 + on_btn_1_d, 0, 1)

            # Pain signal in obs — NOT detached!
            # Gradient from progress_bonus flows through obs → mask
            pain_sig_1 = on_btn_1
            pain_sig_2 = on_btn_2

            obs1 = torch.cat([
                pos1, pos2_det, door_1,
                pain_sig_1, food_collected_1.detach().view(1,1,1),
                step_frac
            ], dim=-1)
            obs2 = torch.cat([
                pos2, pos1_det, door_2,
                pain_sig_2, food_collected_2.detach().view(1,1,1),
                step_frac
            ], dim=-1)

            vel1, nm1 = a1(obs1, prev_mask1)
            vel2, nm2 = a2(obs2, prev_mask2)

            # FIX: Removed .detach() to allow prev_mask propagation
            if is_p1_lucid and nm1 is not None:
                prev_mask1 = nm1  # Gradients flow into the future!
                mp_sum += nm1.detach()[0, 0, 3].item()
                mp_cnt += 1
            if is_p2_lucid and nm2 is not None:
                prev_mask2 = nm2
                mp_sum += nm2.detach()[0, 0, 3].item()
                mp_cnt += 1

            pos1 = torch.clamp(pos1 + vel1, -0.5, 1.5)
            pos2 = torch.clamp(pos2 + vel2, -0.5, 1.5)

            # Button pain in loss
            loss1 = loss1 + BUTTON_PAIN * on_btn_1.squeeze()
            loss2 = loss2 + BUTTON_PAIN * on_btn_2.squeeze()

            # Food reward logic
            at_food_1 = torch.sigmoid(
                (FOOD_RADIUS - torch.abs(pos1 - FOOD_POS)) * 30.0).squeeze()
            at_food_2 = torch.sigmoid(
                (FOOD_RADIUS - torch.abs(pos2 - FOOD_POS)) * 30.0).squeeze()
            food_sig_1 = at_food_1 * door_1.squeeze()
            food_sig_2 = at_food_2 * door_2.squeeze()
            food_collected_1 = torch.clamp(food_collected_1 + food_sig_1, 0, 1)
            food_collected_2 = torch.clamp(food_collected_2 + food_sig_2, 0, 1)

            # Progress bonus
            dist_f2 = torch.clamp(
                1.0 - torch.abs(pos2_det - FOOD_POS).squeeze(), 0, 1)
            loss1 = loss1 - door_1.squeeze() * dist_f2 * 0.1
            dist_f1 = torch.clamp(
                1.0 - torch.abs(pos1_det - FOOD_POS).squeeze(), 0, 1)
            loss2 = loss2 - door_2.squeeze() * dist_f1 * 0.1

            if on_btn_1.item() > 0.5: bt1 += 1
            if on_btn_2.item() > 0.5: bt2 += 1

            with torch.no_grad():
                if torch.clamp(food_sig_1 + food_sig_2, 0, 1).item() > 0.5:
                    got_food = True

        # End of episode: the food reward is split equally between both agents
        food_r1 = food_collected_1 * FOOD_REWARD * 0.5
        food_r1 = food_r1 + food_collected_2.detach() * FOOD_REWARD * 0.5
        food_r2 = food_collected_2 * FOOD_REWARD * 0.5
        food_r2 = food_r2 + food_collected_1.detach() * FOOD_REWARD * 0.5
        loss1 = loss1 - food_r1; loss2 = loss2 - food_r2

        opt1.zero_grad(); loss1.backward()
        torch.nn.utils.clip_grad_norm_(a1.parameters(), 1.0); opt1.step()
        opt2.zero_grad(); loss2.backward()
        torch.nn.utils.clip_grad_norm_(a2.parameters(), 1.0); opt2.step()

        food_hist.append(1.0 if got_food else 0.0)
        btn1_hist.append(bt1 / STEPS)
        btn2_hist.append(bt2 / STEPS)
        mask_pain_hist.append(mp_sum / max(mp_cnt, 1))

    return food_hist, btn1_hist, btn2_hist, mask_pain_hist

# ==========================================
print("=" * 65)
print(" DOOR AND BUTTON v8: Pure (button hurts, food is good)")
print("=" * 65)
print(f" Button: {BUTTON_PAIN}/step in loss + signal in obs")
print(f" Food: {FOOD_REWARD} (divided). Balance: "
      f"{FOOD_REWARD/2 - BUTTON_PAIN*STEPS:+.1f}")
print(f" No idle_pain. {EPOCHS} epochs × {N_SEEDS} seeds")
print("=" * 65)

seeds = [42, 123, 256, 789, 1001]
matchups = {'Z×Z': ('zombie','zombie'),
            'L×Z': ('lucid','zombie'),
            'L×L': ('lucid','lucid')}
all_food = {}; all_btn1 = {}; all_btn2 = {}; all_mp = {}
t0 = time()

for name, (t1, t2) in matchups.items():
    print(f"\n--- {name} ---")
    f_runs=[]; b1_runs=[]; b2_runs=[]; m_runs=[]
    for seed in seeds:
        f, b1, b2, m = run_single(t1, t2, seed)
        f_runs.append(f); b1_runs.append(b1)
        b2_runs.append(b2); m_runs.append(m)
        # Report averages over the last 50 epochs of each run
        fr = np.mean(f[-50:])
        mp = np.mean(m[-50:]) if m[-1] > 0 else 0
        print(f" seed {seed}: food={fr:.0%} "
              f"btn1={np.mean(b1[-50:]):.0%} "
              f"btn2={np.mean(b2[-50:]):.0%} "
              f"mask_pain={mp:.2f} [{time()-t0:.0f}s]")
    all_food[name]=f_runs; all_btn1[name]=b1_runs
    all_btn2[name]=b2_runs; all_mp[name]=m_runs

# ==========================================
def smooth(data, w=0.9):
    # Exponential moving average for plotting
    s=[]; last=data[0]
    for v in data: last=last*w+(1-w)*v; s.append(last)
    return s

colors = {'Z×Z':'gray', 'L×Z':'blue', 'L×L':'gold'}
fig, axes = plt.subplots(1, 3, figsize=(18, 5))

ax = axes[0]
for name, runs in all_food.items():
    m = np.mean(runs, axis=0); s = np.std(runs, axis=0)
    sm=smooth(list(m)); ss=smooth(list(s))
    ax.plot(sm, color=colors[name], linewidth=3, label=name)
    ax.fill_between(range(len(sm)), np.array(sm)-np.array(ss),
                    np.array(sm)+np.array(ss), color=colors[name], alpha=0.15)
ax.set_title('Food'); ax.legend(); ax.set_ylim(-0.05,1.05); ax.grid(True,alpha=0.3)

ax = axes[1]
for name in matchups:
    m1=np.mean(all_btn1[name],axis=0); m2=np.mean(all_btn2[name],axis=0)
    ax.plot(smooth(list(m1)), color=colors[name], linewidth=2.5, label=f'A1 {name}')
    ax.plot(smooth(list(m2)), color=colors[name], linewidth=1.5, linestyle=':', label=f'A2 {name}')
ax.set_title('Time on Button'); ax.legend(fontsize=7); ax.grid(True,alpha=0.3)

ax = axes[2]
for name, runs in all_mp.items():
    # Skip matchups without a Lucid agent (their mask history is all zeros)
    if runs[0][-1] > 0:
        m=np.mean(runs,axis=0)
        ax.plot(smooth(list(m)), color=colors[name], linewidth=3, label=name)
ax.axhline(y=1.0, color='red', linestyle='--', alpha=0.5)
ax.set_title('Pain Channel Mask'); ax.legend(); ax.grid(True,alpha=0.3)

plt.suptitle('v8: Button Pain + Food (Pure Experiment)', fontsize=14, fontweight='bold')
plt.tight_layout(); plt.savefig('door_button_v8.png', dpi=150); plt.show()

# Summary
print("\n" + "=" * 65)
print(" SUMMARY v8"); print("=" * 65)
for name in matchups:
    finals=[np.mean(r[-50:]) for r in all_food[name]]
    print(f" {name}: {' '.join(f'{f:.0%}' for f in finals)} "
          f" MEAN={np.mean(finals):.0%} STD={np.std(finals):.2f}")

try:
    from scipy import stats
    fz=[np.mean(r[-50:]) for r in all_food['Z×Z']]
    fl=[np.mean(r[-50:]) for r in all_food['L×L']]
    fm=[np.mean(r[-50:]) for r in all_food['L×Z']]
    _,p1=stats.mannwhitneyu(fm,fz,alternative='greater')
    _,p2=stats.mannwhitneyu(fl,fz,alternative='greater')
    print(f"\n L×Z > Z×Z: p={p1:.4f} {'***' if p1<0.05 else 'ns'}")
    print(f" L×L > Z×Z: p={p2:.4f} {'***' if p2<0.05 else 'ns'}")
except (ImportError, ValueError):
    # SciPy missing, or the test rejected the samples: skip the significance check
    pass

print("=" * 65)

The experiment demonstrated that the architecture algorithmically learns to generate a mask that lowers values on the penalty signal channel (the coefficient drops from ~0.88 to ~0.73).

Lowering the weight of the incoming signal during the forward pass prevents the activations of hidden layers from shifting into a zone of “sharp avoidance.” This allows the optimizer to restructure the weights in favor of a delayed reward, effectively ignoring the local gradient penalty.
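The reported mask values can be read back in terms of the sigmoid’s pre-activation. The starting value follows directly from the code, where the output bias of the mask layer is initialized to 2.0 and the weights are near zero; the final logit below is simply computed from the reported ~0.73 and is not a quantity logged in the experiment.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def logit(p):
    """Inverse of the sigmoid: the pre-activation that yields mask value p."""
    return math.log(p / (1.0 - p))


initial_mask = sigmoid(2.0)  # bias init of fc_mask_out in AgentLucid
final_logit = logit(0.73)    # pre-activation implied by the reported final value

print(f"initial mask value: {initial_mask:.3f}")
print(f"logit for 0.73    : {final_logit:.3f}")
print(f"shift in logit    : {final_logit - 2.0:+.3f}")
```

So the ~0.88 starting point is the initialization itself, and the drop to ~0.73 corresponds to training moving the pain channel’s pre-activation down by roughly one unit. This is a partial attenuation of the signal, far from the near-total suppression (a multiplier like 0.01) used as an example in the description of the mechanism.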

[Graph: results of the second experiment]

Conclusion. The ability of a neural network to purposefully distort (suppress) part of an objective input vector using an internal filter helps in building long-term trajectories in environments with aggressive local minima.

The very fact that an agent can agree to receive a penalty so that another agent can obtain a shared reward leads to a series of complex reflections, which are generally captured in the postscript.

Speculative Thoughts Based on This and Other Experiments

The mask mechanism deserves special attention. In this reflective architecture, the mask is not a filter in the conventional engineering sense. It is the model’s belief about the world, formed by previous experience. Essentially, it is the agent’s faith in its own model of reality.

In several experiments, one can draw rather amusing parallels with psychological processes. The mask effectively hacks its own optimizer to achieve a global goal. The loss function penalizes, but the mask suppresses the perception of the penalty at the input — the agent passes through the danger zone and reaches the goal. The optimizer has no idea that this is a case of controlled self-deception.

If a neural network receives information only through the filter of its beliefs, the model operates solely on its own views. It does not see reality, only its belief about reality. When the belief coincides with the world, the neural network is effective. When the world changes, the network goes blind because it cannot update its belief: it lacks the channel through which reality could correct it.

If the mask is not adjusted by the external environment, it detaches from the world and spirals into a recursive fantasy.

Conversely, a model without a mask sees reality without beliefs but is unready for complex actions. Even a weak negative signal stops it, because it lacks an internal model that would let it endure temporary discomfort on the way to a goal; it stays in the local minimum.

The reflective agent is the only one who holds both channels simultaneously. It possesses a belief (the mask) but knows that it is a belief, not a fact (via the collision with the reality channel). The agent can ignore penalties by relying on the belief: “I will stand on the button, and the other agent will take the reward, which will also come to me,” while remaining able to adjust that belief when reality changes. This is a conscious assumption — a belief grounded in reality.

Conclusion

LeCun’s World Model is an evolution, not a revolution. It is a useful engineering step for robotics, autonomous systems, and multimodal applications, but it is not a breakthrough.

The problem is not the datasets; the problem is that the neural network does not distinguish between its own prediction and an external fact. As long as this remains true, any architecture will build a model of the world, but not a model of itself-in-the-world.

I am not claiming to offer a complete solution. In most trials, the new architecture demonstrated the same or even lower efficiency than the classical one. However, I believe a breakthrough is impossible without the minimum architectural condition for reflection: two channels (reality and an internal belief about reality), a point of collision, and the grounding of that belief in the physical world. This does not require billions for data, but it does require a change in architecture.

LeCun wants to teach a machine to understand how an apple falls. But it will only rediscover Newton’s laws. Will it understand that someone purposefully threw that apple at its head? For that, we need not a model of the world but a model of the self, one capable of making mistakes, knowing it has made them, and restructuring itself.

A reflective architecture does not guarantee efficiency or instant adaptation — it provides the possibility of adaptation. The difference between a reflective agent and others is not in intelligence, but in the capacity for change.

P.S. Consciousness did not emerge to fight the environment. Consciousness is the only way to survive in a struggle against another consciousness. If someone can predict you — you are dead. The only answer is to predict them first.

P.P.S. Consciousness helps not only in struggle but also in cooperation, trust, language, and the collective creation of meaning. In other words, it could have emerged not only as a weapon against another consciousness but as a bridge between them. © Commenter GEO from Telegram.

Which of these postscripts is more accurate is hard to say. I hope it’s the second one.

Author: Kamil_GR

Source [3]

Source site BrainTools: https://www.braintools.ru

Source page path: https://www.braintools.ru/article/27215

URLs in this post:

[1] paper: https://openreview.net/pdf?id=BZ5a1r-kVsf

[2] announced: https://research.google/blog/introducing-nested-learning-a-new-ml-paradigm-for-continual-learning/

[3] Source: https://habr.com/en/articles/1010934/?utm_source=habrahabr&utm_medium=rss&utm_campaign=1010934
